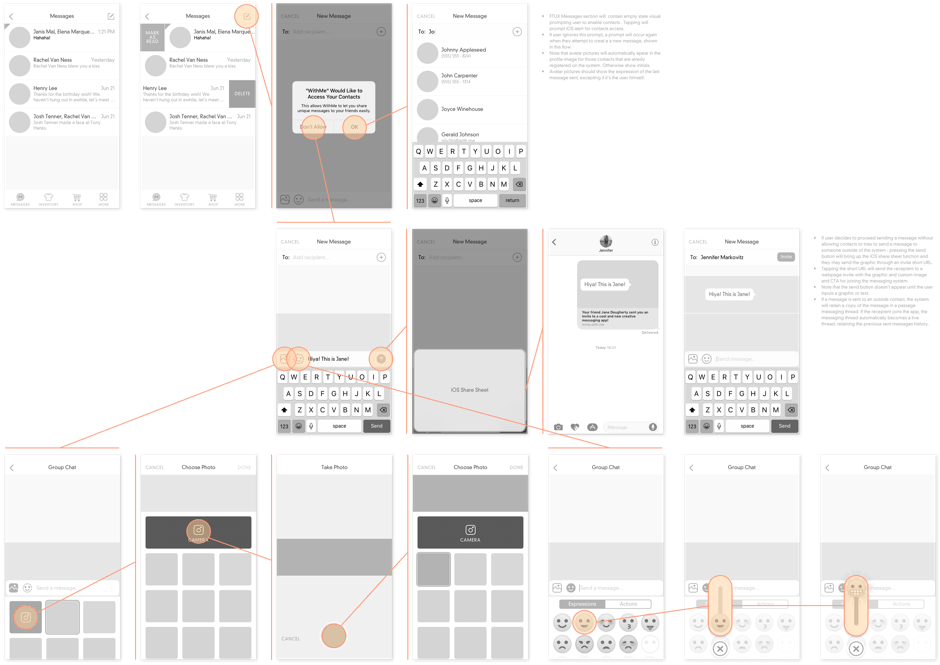

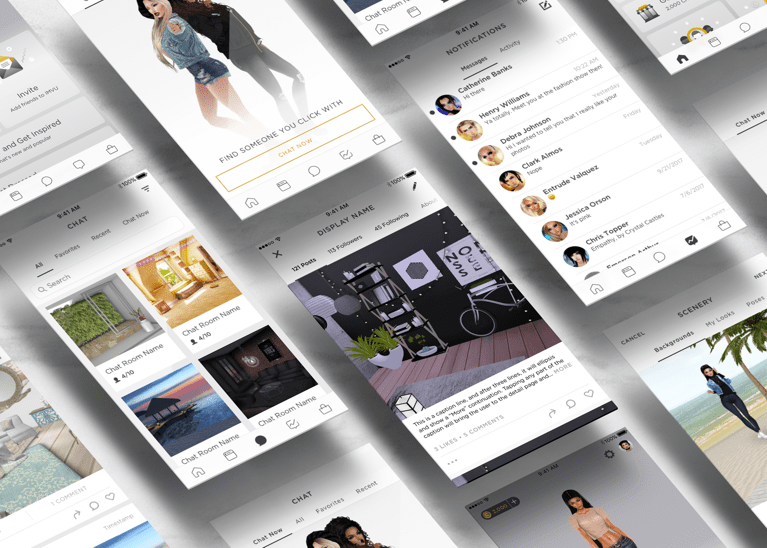

In 2014, I was part of a team tasked with a new roadmap initiative: our mission was to draw on existing company assets to create a highly visual, emotive, and customizable messaging app. I collaborated with another designer to create the product spec, and worked closely with the engineers to scope the design within technical limitations.

Within six months, the app was built for iOS 7 and shipped, and my work culminated in a design patent.

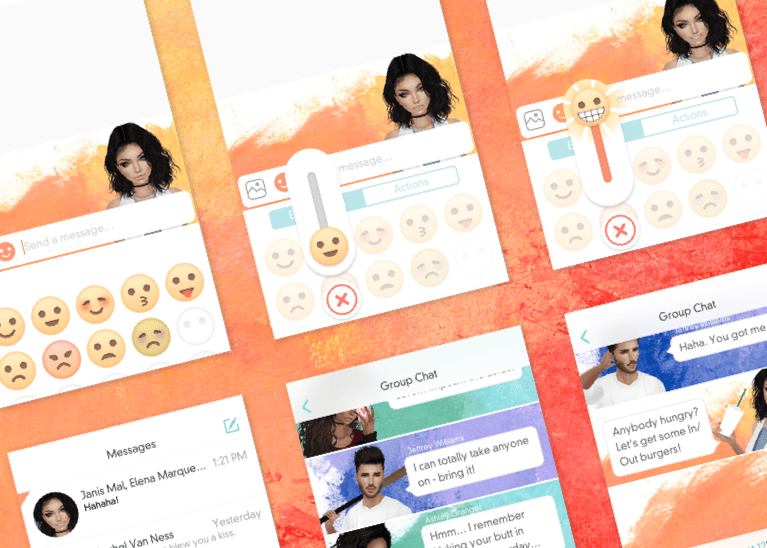

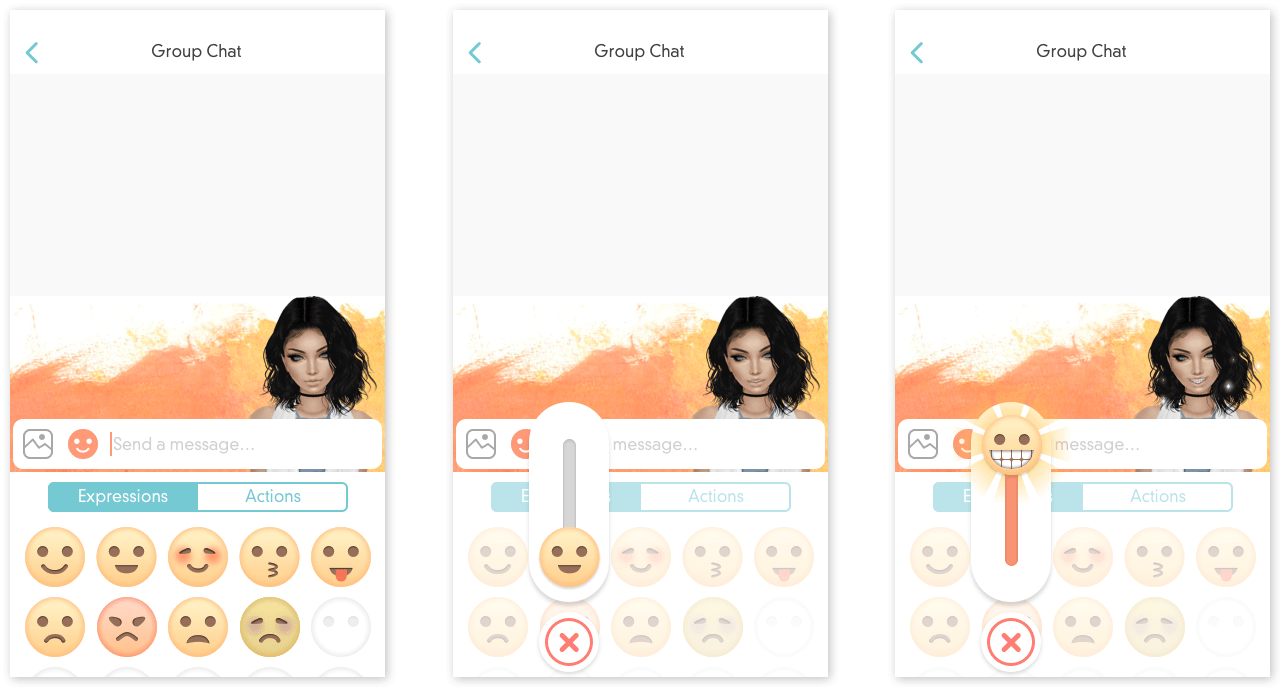

High-fidelity storyboard of amplifying emoji intensity

At the time, the company did not have much experience shipping mobile products, and this project was its first real foray into the mobile product space.

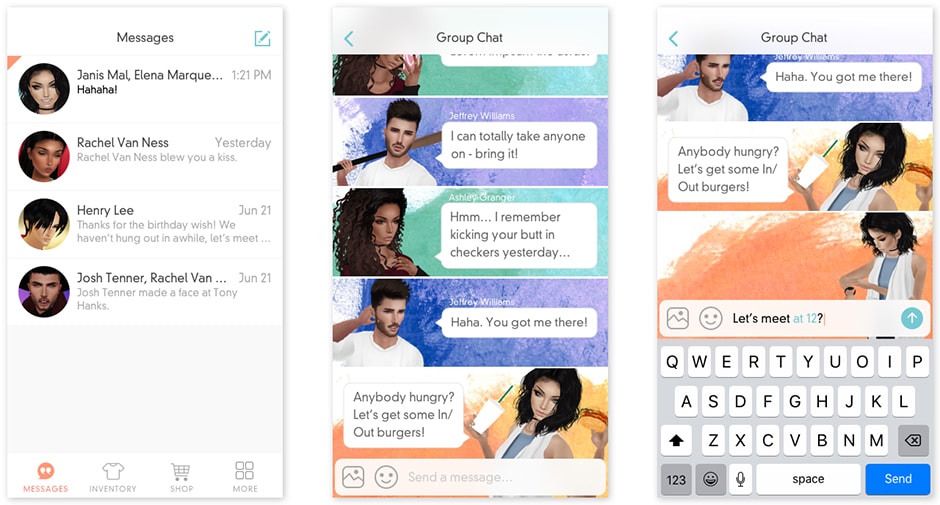

The objective was to prove that a viable mobile product could be built from company assets, specifically the 3D avatars. We weighed the product benefits and proposed that an immersive chat app would be the best fit given the scope.

Asynchronous chat allowed for a lightweight experience that was easily consumable on mobile devices, and customizable "emojis" offered a more immersive experience that would help the feature set stand out from the competition.

We had to work within several technical limitations. Morph nodes were not built into the head meshes of the avatars, which meant that we could not exaggerate facial expressions. The head shapes were also unable to host interchangeable parts (e.g. swapping different noses or mouths), which drastically limited the styling of the face.

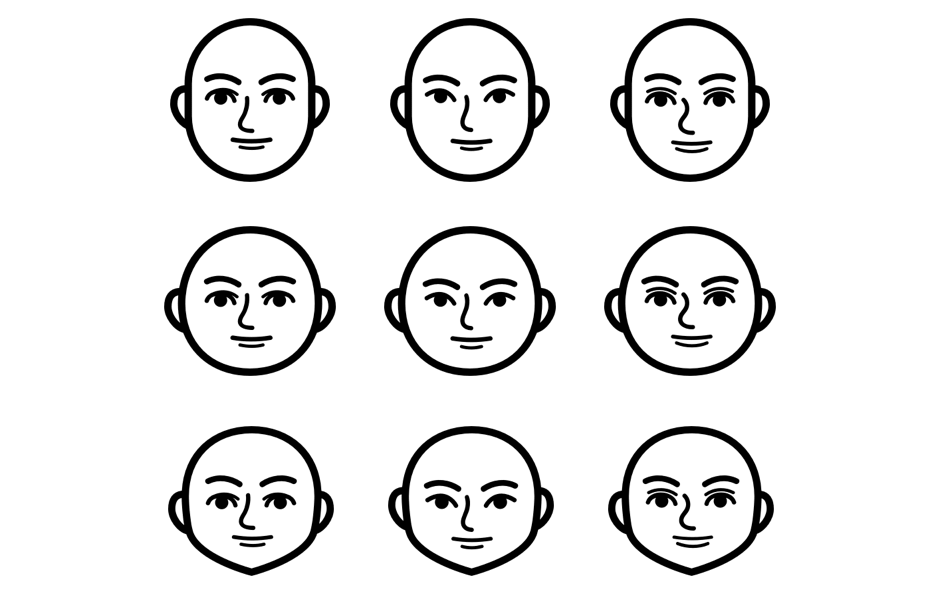

Basic matrix of facial types (icons courtesy of Amy Liu)

Our solution was to express emotion through bodily animation and effects rather than relying heavily on facial cues (given the small screen real estate on mobile, facial nuances would have been overlooked regardless). Customization limitations were trickier to handle; eventually we had to resort to creating nine head permutations, built from a very basic matrix of facial features (eyes, mouth, nose) multiplied by three head shapes. Common sense and user research showed that while users did not feel the provided faces were wholly representative of themselves, the options nevertheless lent enough control to feel viably customizable for an MVP.